This page has been permanently moved. Please

CLICK HERE to be redirected.

Thanks, Craig.

Only a fool would challenge the ascertain that Oracle's wait interface has not profoundly changed the terrain of Oracle performance diagnosis. But sometimes we push the wait interface too far and expect too much. In this blog entry I will demonstrate that using the wait interface to determine the number of non buffer cache reads (i.e., "physical" reads) can not be satisfactorily determined solely through the wait interface. But I will also provide a solution to this problem.This also implies that based solely on Oracle's wait interface, determining the total physical IO read time can not be satisfactorily determined by simply multiplying the number of physical IO read related wait events occurrences by their respective average wait time. An alternative solution will be presented in a future blog entry.

From a broader performance perspective this is important because many Oracle performance diagnostic methods, tools, and products use Oracle's wait interface to determine the total IO read wait time which feeds into the total non-idle wait time. But as my research demonstrates and as strange as it seems, if you want the real total IO read wait time you must use information from both the classic instance activity views (i.e., v$[sess,sys]stat) and the wait interface views, or use a creative sampling strategy.

Expecting Too Much From the Wait Interface

One such example of expecting too much is using the wait interface to determine the number of non buffer cache reads. I typically call these non buffer cache reads "physical IO reads", "physical IO", or simply "PIO".

There are actually a number of physical read related wait events. In addition to the classic db file sequential read and db file scattered read, there is also direct path read, direct path read temp, db file parallel read, control file sequential read, and log file sequential read. There are others and I'm sure Oracle will add more, but these are ones that are likely related to significant amounts of time. For typical Oracle wait event descriptions, click here.

To hypothetically demonstrate this, suppose db file sequential read and db file scattered read waits occurred 1.2M times over a one hour period and there are no other physical IO read events occurring. We would expect to see the instance statistic (v$sysstat) physical read total IO requests to be 1.2M and tracing the processes at the OS level to also show 1.2M read system calls. While this can occur, as I will demonstrate below and you can check on your system, don't count on it!

This mismatch occurs because Oracle does not fully instrument its physical reads, which currently on Linux can be the implemented through the pread64 or readv system calls. In this blog entry I will demonstrate this mismatch and provide a script so you can demonstrate this on one of your own systems.

How Oracle Retrieves & Times Non Oracle Cache Reads

When a server process requires an Oracle block it checks the cache buffer chain structure to determine if the block currently resides in the buffer cache. If the block does not reside in the buffer cache the Oracle process must ask the operating system for the block. To ask for the block on a Linux system Oracle may issue a pread64 or a readv call. If Oracle instrumented the call, we can query details about the read call through Oracle's wait interface.

We can see an example of how Oracle both instruments the read call and actually makes the read call by operating system tracing a server process. Here is an example of tracing an active server process performing a physical multi block IO request.

$ strace -rp 14876

0.000027 gettimeofday({1290553854, 957524}, NULL) = 0

0.000028 pread64(13, "\6\242\0\0p\36\0\1..."..., 131072, 63832064) = 131072

0.000095 gettimeofday({1290553854, 957647}, NULL) = 0

Process 14876 detached

Shown above will provide the server process with the requested blocks and the related timing information. Oracle give this read the name db file scattered read with a wait time of just over 0.095ms. I provide much more detail about this process in my book, Oracle Performance Firefighting and also in my training course titled, Oracle Performance Firefighting.

If Oracle, for whatever reason, did not instrument the pread64 call, that is it did not issue the gettimeofday calls and record their time difference, the read call would obviously occur but we would not see its occurrence and the related timing information through Oracle's wait interface.

The Call Occurrence Mismatch

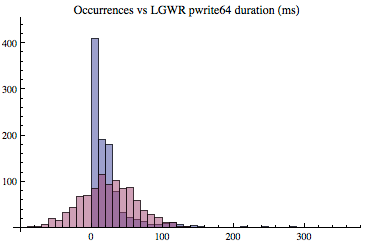

My experiments gathered IO read wait occurrences from three different sources; through Oracle's wait interface, Oracle's instance statistics views, and by operating system tracing an Oracle server process. Through Oracle's wait interface, the number of waits can be determined through the total_waits column in v$system_event and v$session_event. Through Oracle's instance statistics, the number of read calls (not the number of blocks) can be determined by referencing the statistic physical read total IO requests. On Linux, the number of read system calls can be determined by operating system tracing a process using strace, grep'ing for readv and pread64, and then counting their occurrences.

For my session level experiment I created a simple script that can be downloaded here. Here is an actual output file for a very small run; gathering only six, 30 second samples.

sample stracecnt sesstatcnt waitcnt

1 2215 2215 2215

2 4242 4238 4237

3 4285 4283 4276

4 4287 4287 4286

5 3528 3383 3397

6 2180 2180 2180stracecnt is count of readv and pread64 systems calls gathered by running strace

sesstatcnt is the number of v$sesstat, physical read total IO requests

waitcnt is the sum of total_waits from v$session_event

Remember all the data is related to a single server process and the v$sesstat and v$session_event counts represent the deltas, that is, the difference between the ending value and the beginning value. If you're not on Linux, you may need to make some shell script modifications.

My conclusions where not taken from the above six 30 second samples. My conclusions are based on correspondence with some trusted colleagues and by running the script on my Oracle 11R2 Linux four CPU core system and collecting sixty samples at 900 seconds each for a single server process with a majority of direct path read wait occurrences and wait time. For each sample the server process issued around around 221K IO read calls. The average number read system calls (readv, pread64) was 221,286 and the average number of read calls recorded from Oracle's instance statistics view v$sesstat (physical read total IO requests) was 221,289. The average difference was only 3 calls, which could easily be accounted for by how I collected the data. You can see the actual experimental output here.

This experiment and correspondence with Gopal Kandhasamy (co-author of Oracle Wait Interface: A Practical Guide to Performance Diagnostics & Tuning), indicates Oracle's instance statistics views are a reliable source for IO read occurrences.

While it appears Oracle's instance activity views are a reliable sources for IO read call occurrences, is Oracle's wait interface also a reliable source for the number of IO read calls? On my test system the wait interface occurrences closely matched data from both the instance statistic view and from strace. However, looking at real production systems I tend to see something quite different. For example, I randomly picked three Oracle 10G Statspack/AWR reports at my disposal and this is what I found:

Ref: SG, Oracle 11.2

wait interface physical read related waits occurrences 1,082,064

instance statistic, physical read total IO requests 1,521,403

Event occurrences to instance statistic ratio: 0.71

Ref: BF, Oracle 10.2

wait interface physical read related waits occurrences 190,272

instance statistic, physical read total IO requests 230,383

Event occurrences to instance statistic ratio: 0.83

Ref: PROD25, Oracle 10.2

wait interface physical read related waits occurrences 156,563

instance statistic, physical read total IO requests 156,894

Event occurrences to instance statistic ratio: 1.00

Based on the three observations above, other observations from other systems, data from some of my colleagues, and correspondence with Gopal Kandhasamy (co-author of Oracle Wait Interface: A Practical Guide to Performance Diagnostics & Tuning) indicates Oracle's wait interface is not a reliable source for IO read occurrences.

What about timing information?

What I have presented above is specifically related to the number of occurrences, that is, the count. If this is not troubling enough, consider the fact that if the number of occurrences is low, then determining the total IO related read time will also be low. And therefore any method, analysis, script, tool, or product using wait interface occurrences will produce a low total wait time value. Stated plainly, for read IO related wait events, the column v$[session,system]_event.time_waited is likely under reported.

While the total IO read wait time appears to be under reported, the average wait time appears to be solid. When a read call is instrumented, Oracle of course records the time along with the occurrence providing the data required to calculate an average. Also, when comparing IO read wait time with operating system tools such as iostat and also with observing the read system call times, it appears Oracle does record the correct average wait time. I will be blogging about this in my "average" blog series.

It is more difficult to calculate the total IO read time waited than you might think. This difficultly exists because there are multiple read wait events and they don't all have an obvious instance statistic to easily determine their count. For quantitative Oracle performance analysis it is important to get a respectable total IO read time, so I am working on a solutions which, I plan to blog about soon. Stay tuned...

Concluding Thoughts

Oracle's done a great job instrumenting its kernel code. Such a great job in fact, that most performance analysts rely heavily on the data it provides. But the wait interface is limited when kernel developers limit its use. This blog entry demonstrates one such limit, specifically using the wait interface column total_waits to determine the number of IO read call occurrences.

After Posting Note: Please read Part 2 as it has additional details that bring some closure to this topic.

Thanks for reading!

Craig.

P.S. If you want me to respond to a comment or have a question, please feel free to email me directly at craig@orapub .com. I use a challenge-response spam blocker, so you'll need to open the challenge email and click on the link or I will not receive your email.